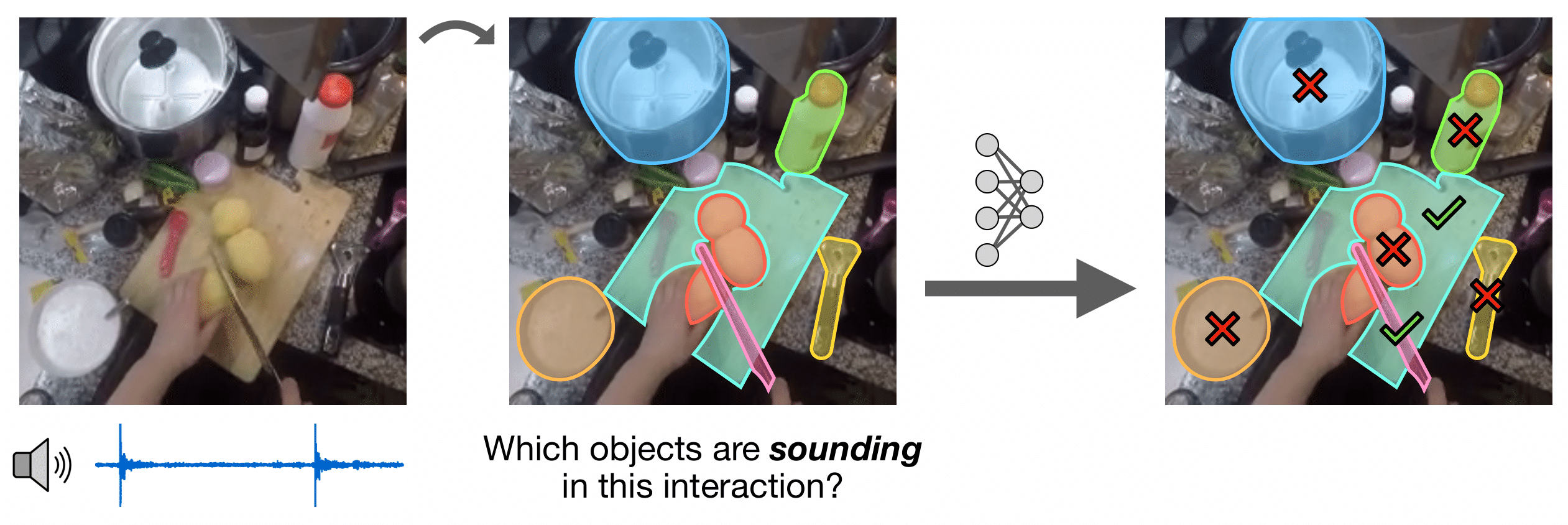

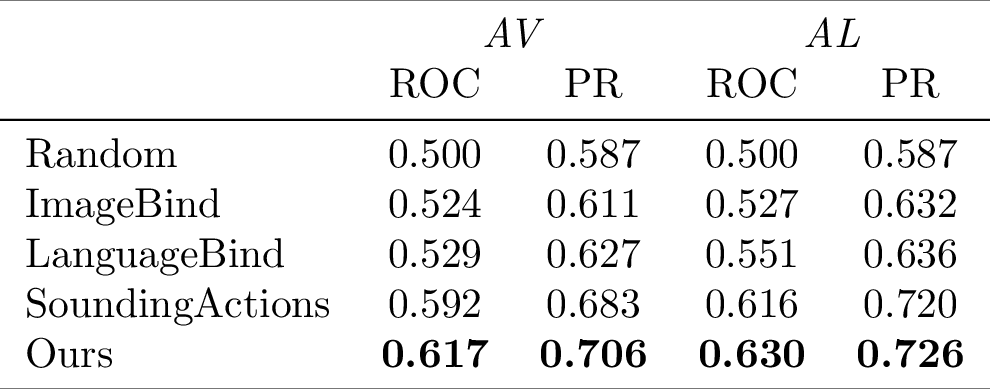

Can a model distinguish the sound of a spoon hitting a hardwood floor from that of a spoon hitting a carpeted one? Everyday object interactions produce sounds unique to the objects involved. We introduce the sounding object detection task to evaluate a model's ability to link these sounds to the objects directly involved. Inspired by human perception, our multimodal object-aware framework learns from in-the-wild egocentric videos. To encourage an object-centric approach, we first develop an automatic pipeline that computes segmentation masks of the objects involved; during training, these masks guide the model's focus towards the most informative regions of the interaction. A slot attention visual encoder further enforces an object prior. We demonstrate state-of-the-art performance on our new task as well as on existing multimodal action understanding tasks.

@inproceedings{yang2025clink,
  title = {Clink! Chop! Thud! -- Learning Object Sounds from Real-World Interactions},
  author = {Mengyu Yang and Yiming Chen and Haozheng Pei and Siddhant Agarwal and Arun Balajee Vasudevan and James Hays},
  year = {2025},
  booktitle = {ICCV},
}